Nanomaterial-based flexible sensors (NMFSs) have advanced significantly in recent years as interfaces for virtual reality (VR) and metaverse technologies. Researchers from Changchun University of Science and Technology (CUST) and City University of Hong Kong (CityU) have surveyed how these sensors are fabricated and how they interact with VR applications. Their review, published in the International Journal of Extreme Manufacturing (IJEM), examines advances in NMFSs and their potential to change human-computer interaction.

Nanomaterials such as nanoparticles, nanowires, and nanofilms have been widely incorporated into flexible sensors because of their unique properties and ease of processing. The resulting sensors offer high sensitivity, low power consumption, malleability, and reliability, and they can be fabricated easily at large scale. Compared with traditional rigid sensors, NMFSs conform closely to human skin or clothing, which makes them lighter and more comfortable to wear. These characteristics make NMFSs well suited to VR and metaverse systems.

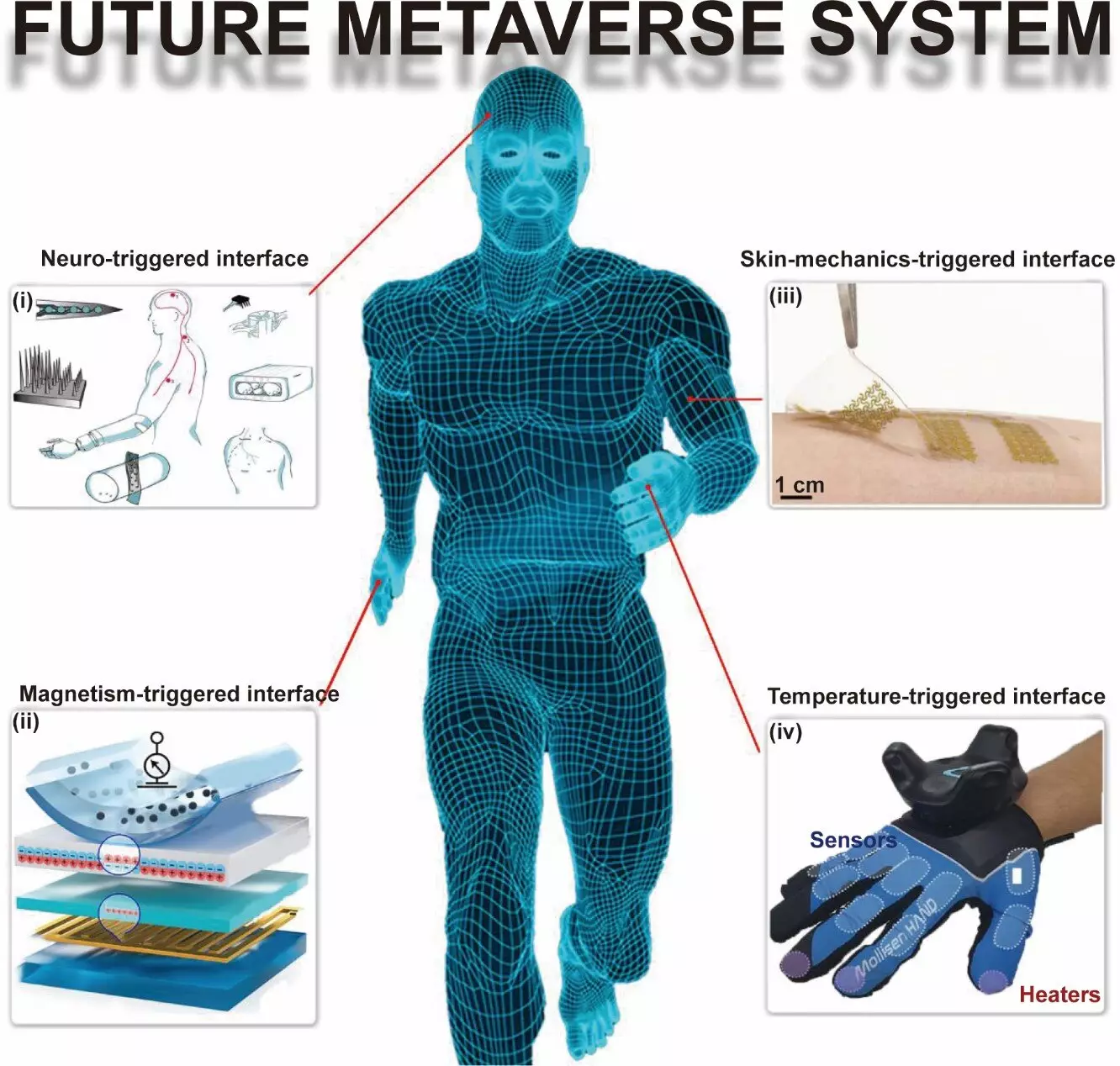

The review discusses various triggering mechanisms for interaction between NMFSs and VR applications. These mechanisms include:

– Skin-mechanics-triggered interfaces

– Temperature-triggered interfaces

– Magnetically triggered interfaces

– Neural-triggered interfaces

By utilizing these mechanisms, NMFSs can effectively capture and interpret physical and physiological information from the human body. This data is crucial for enabling seamless interaction between users and virtual environments.

The Role of Machine Learning

Machine learning has emerged as a promising tool for processing sensor data and controlling avatars in the metaverse. Machine learning algorithms can analyze and interpret the signals that NMFSs collect from the human body, which lets the virtual world respond in real time to the user’s movements and provides a more immersive, interactive experience. Combining machine learning with NMFSs therefore holds considerable potential for advancing VR and metaverse technologies.
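
To make the idea of turning sensor signals into avatar actions concrete, here is a minimal sketch in Python. It is not the method described in the review: the signal shapes, the three gesture labels, the feature set, the nearest-centroid classifier, and the `AVATAR_ACTIONS` mapping are all assumptions chosen for illustration, and the data is synthetic rather than recorded from a real sensor.

```python
import math
import random

# Hypothetical mapping from a recognized gesture to an avatar command.
AVATAR_ACTIONS = {"rest": "idle", "bend": "curl_finger", "tap": "select"}


def features(window):
    """Summarize a window of readings as (mean, peak-to-peak, RMS of first difference)."""
    mean = sum(window) / len(window)
    span = max(window) - min(window)
    diffs = [b - a for a, b in zip(window, window[1:])]
    rough = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return (mean, span, rough)


def synthetic_window(gesture, rng, n=50):
    """Generate an invented strain-sensor trace for one gesture (illustration only)."""
    noise = lambda: rng.gauss(0.0, 0.02)
    if gesture == "rest":
        return [noise() for _ in range(n)]
    if gesture == "bend":
        # Slow, large deflection, such as a finger bending and relaxing.
        return [math.sin(math.pi * i / (n - 1)) + noise() for i in range(n)]
    if gesture == "tap":
        # Short, sharp pulse in the middle of the window.
        mid = n // 2
        return [(0.8 if mid - 2 <= i <= mid + 2 else 0.0) + noise() for i in range(n)]
    raise ValueError(f"unknown gesture: {gesture}")


def train_centroids(labelled_windows):
    """Average the feature vectors of each label to get one centroid per gesture."""
    sums, counts = {}, {}
    for label, window in labelled_windows:
        f = features(window)
        acc = sums.setdefault(label, [0.0] * len(f))
        for k, value in enumerate(f):
            acc[k] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(v / counts[label] for v in acc) for label, acc in sums.items()}


def classify(window, centroids):
    """Return the label whose centroid is closest to the window's features."""
    f = features(window)
    return min(centroids, key=lambda label: math.dist(f, centroids[label]))


if __name__ == "__main__":
    rng = random.Random(0)
    training = [(g, synthetic_window(g, rng)) for g in AVATAR_ACTIONS for _ in range(20)]
    centroids = train_centroids(training)
    gesture = classify(synthetic_window("tap", rng), centroids)
    print(gesture, "->", AVATAR_ACTIONS[gesture])
```

A real system would replace the synthetic traces with calibrated sensor recordings and would likely use a more capable model. The overall shape (window the signal, extract features, classify, map the result to an avatar command) is one common way to structure such a pipeline.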

Applications of Nanomaterial-based Flexible Sensors

The collaborative team from CUST and CityU is actively exploring different functional nanomaterial sensors for applications in virtual reality. These sensors have the potential to revolutionize the VR experience by enabling the detection and monitoring of various physical and physiological parameters. For example, NMFSs can sense skin vibrations, facial expressions, muscle activities, and limb motions. By accurately capturing and interpreting this data, VR systems can provide users with a more realistic and natural experience, enhancing their immersion in the virtual environment.

Advances in nanomaterial-based flexible sensors have opened new possibilities for virtual reality and metaverse technologies. Because they are lightweight, flexible, and highly sensitive, these sensors are well suited to monitoring physical and physiological information from the human body. With ongoing research and development, NMFSs are expected to replace traditional rigid sensors in numerous human-computer interaction applications. Machine learning algorithms extend their capabilities further by enabling more seamless interaction and more immersive experiences in the virtual world. As researchers continue to explore NMFSs, these sensors could change how we interact with virtual environments.
