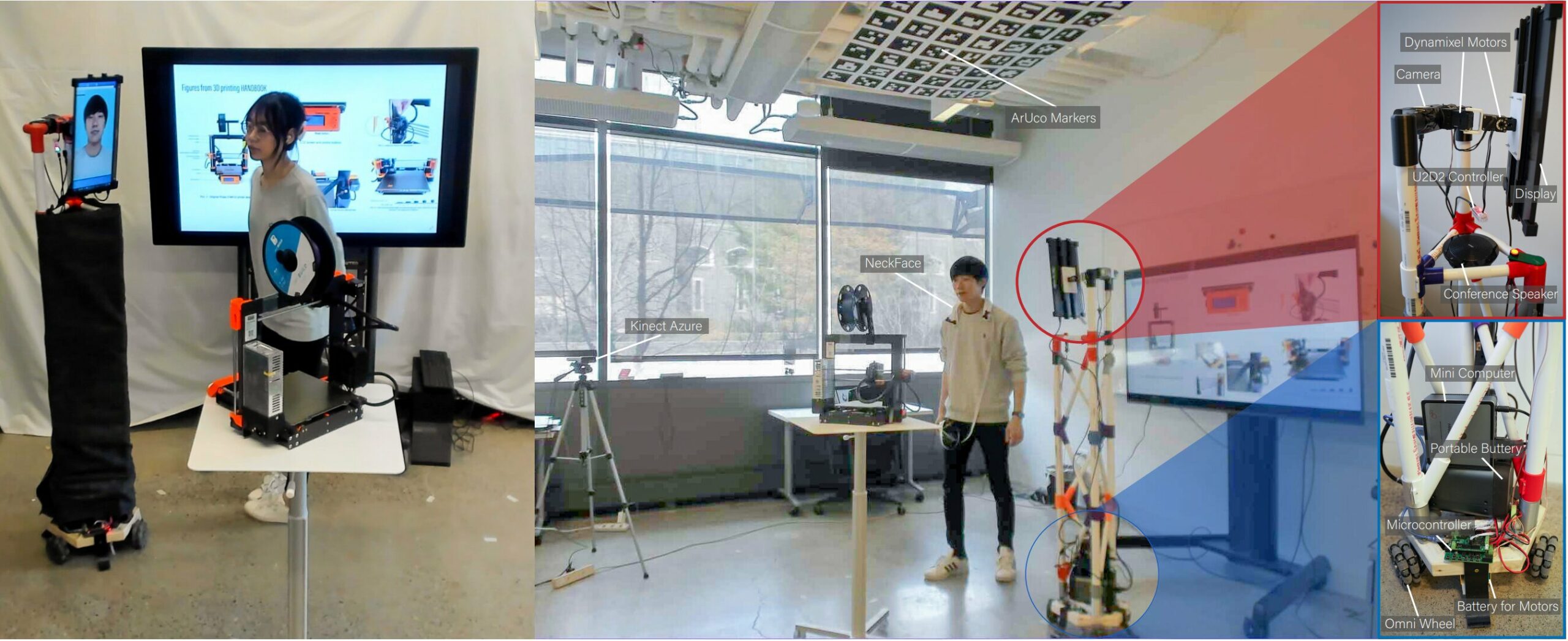

Cornell University researchers have developed a robot called ReMotion that replicates a remote user’s movements in real time, giving that user an automatic physical embodiment in a collaborator’s space. The technology conveys key body language that is lost in standard virtual environments and allows for better, more dynamic interactions between remote collaborators.

How ReMotion Works

The robot stands nearly six feet tall and features omnidirectional wheels for feet, a monitor for a head, and game-engine software for brains. It automatically mirrors the remote user’s movements using NeckFace, another Cornell-made device that tracks head and body movements. The motion data is transmitted to the ReMotion robot in real time, so the robot reproduces the user’s movements in the collaborator’s space.
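To make the mirroring idea concrete, here is a minimal sketch of how a tracked pose could be turned into velocity commands for an omnidirectional base. It is not ReMotion's actual software, which the article does not describe in detail. The `Pose` type, the `mirror_step` function, the gain, and the speed limits are all hypothetical, and the sketch assumes both rooms share a single coordinate frame so the robot can simply aim for the same position and heading as the remote user.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    """Hypothetical pose in a room frame shared by both locations."""
    x: float      # metres
    y: float      # metres
    theta: float  # heading in radians


def wrap_angle(angle: float) -> float:
    """Wrap an angle to the interval [-pi, pi)."""
    return (angle + math.pi) % (2 * math.pi) - math.pi


def mirror_step(robot: Pose, user: Pose, gain: float = 1.0,
                max_speed: float = 1.0, max_turn: float = 2.0):
    """Return (vx, vy, omega) in the robot's body frame.

    A simple proportional controller (an assumption, not the published
    method): the command is proportional to the gap between the robot's
    pose and the remote user's pose, clamped to the given limits.
    """
    # Position error in the shared room frame.
    ex = user.x - robot.x
    ey = user.y - robot.y

    # Rotate the error into the robot's body frame. An omnidirectional
    # base can translate along both axes without turning first.
    c, s = math.cos(robot.theta), math.sin(robot.theta)
    vx = gain * (c * ex + s * ey)
    vy = gain * (-s * ex + c * ey)

    # Clamp the translational speed while keeping its direction.
    speed = math.hypot(vx, vy)
    if speed > max_speed:
        vx *= max_speed / speed
        vy *= max_speed / speed

    # Turn toward the user's heading by the shortest rotation.
    omega = gain * wrap_angle(user.theta - robot.theta)
    omega = max(-max_turn, min(max_turn, omega))
    return vx, vy, omega
```

Calling `mirror_step` each time a new pose arrives would nudge the robot toward wherever the remote user currently stands and faces, with no manual steering involved. A real system would also need obstacle avoidance, smoothing of noisy tracking data, and handling of network delay, none of which is shown here.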

Benefits of ReMotion

In a small study, participants reported feeling more connected to their remote teammates when using ReMotion than when using an existing telerobotic system. They also reported significantly higher shared attention among remote collaborators. Unlike other telepresence robots, ReMotion does not require manual steering, which can distract from the task at hand. Virtual reality and mixed reality collaboration have drawbacks of their own: they can demand an active role from the user, and headsets may limit peripheral awareness.

Future Developments for ReMotion

Currently, ReMotion works only with two users in a one-on-one remote environment, and both users must occupy physical spaces of identical size and layout. However, ReMotion's developers intend to explore asymmetrical scenarios, such as a single remote team member collaborating via ReMotion with multiple teammates in a larger room. With further development, ReMotion could be deployed in virtual collaborative environments, classrooms, and other educational settings.
